Great creative work is messy on purpose. The best ideas start wide, chaotic, and a bit irrational—then get narrowed by constraints, data, and polish. Most “AI automation” flips that sequence: it starts narrow and stays narrow, producing clean-but-average outputs. The goal isn’t to replace the messy part. It’s to protect it—and move faster through the boring parts (brief formatting, cross-checks, compliance, links, UTMs, and handoffs).

This article lays out a pragmatic system to automate briefs and content QA without flattening your team’s taste or originality. You’ll get a repeatable workflow, concrete templates, and guardrails that keep the spark alive.

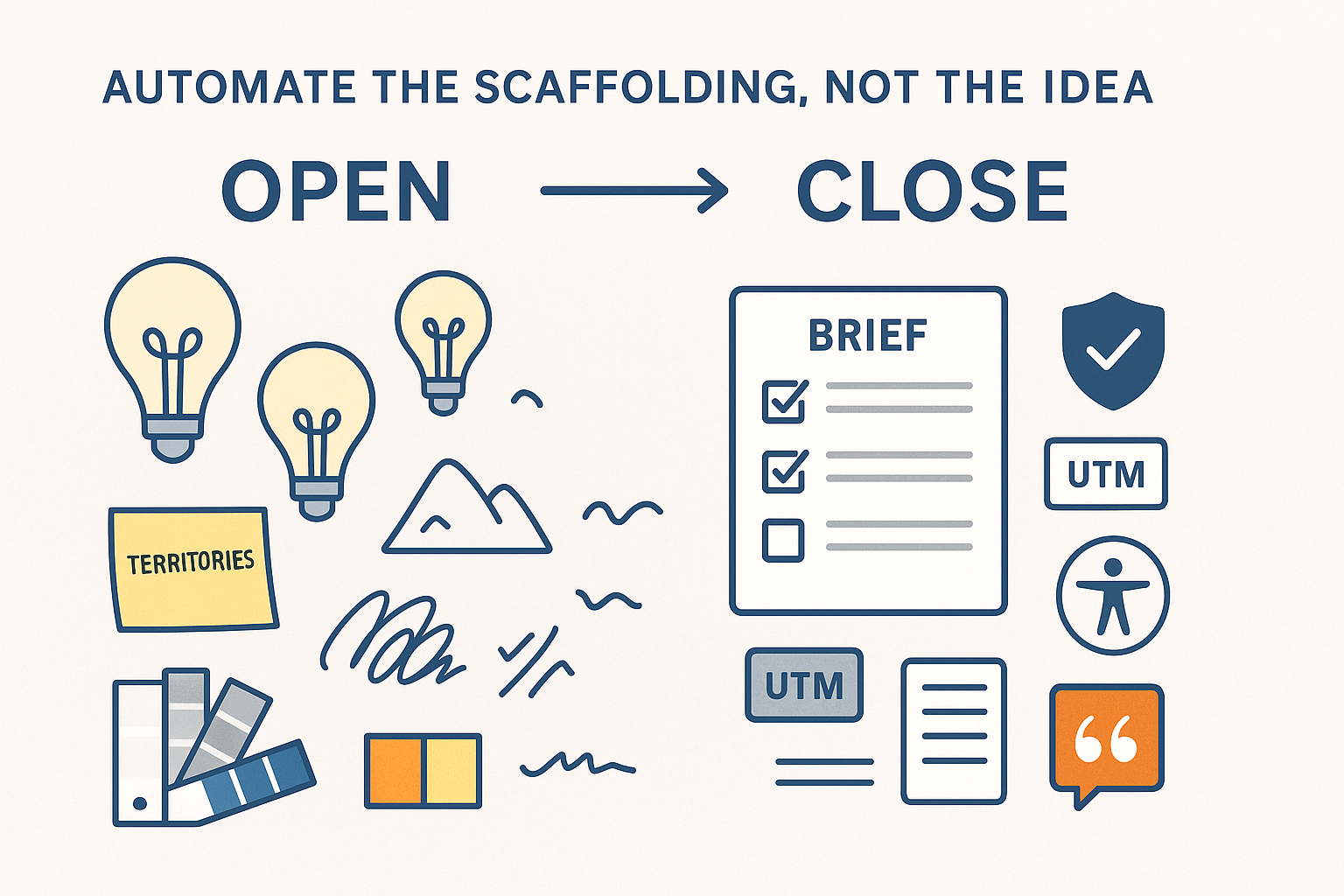

The Operating Principle: Open First, Close Later

Every creative cycle should oscillate between two modes:

- Open — divergence: explore territories, references, analogies, tone ranges, visual motifs. No scorecards yet.

- Close — convergence: pick, structure, justify, and de-risk. Heavy use of templates, retrieval, and QA.

Automation lives primarily in the close phase. Used in the open phase, it must be explicitly framed as provocation, not judgment.

What to Automate (and What to Leave Human)

Automate hard:

- Brief scaffolding (sections, required fields, links to canons and prior winners)

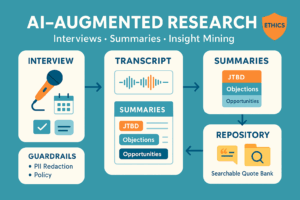

- Fact retrieval and claim validation with citations

- UTM and taxonomy checks

- Brand voice linting and banned-terms screening

- Accessibility and legal lines

- Versioning, change logs, and handoffs

Keep human:

- Territory selection (“what story are we telling?”)

- Taste decisions on tone, rhythm, visual tension

- Risk-reward calls (edginess vs brand posture)

- Final “does this feel true?” review

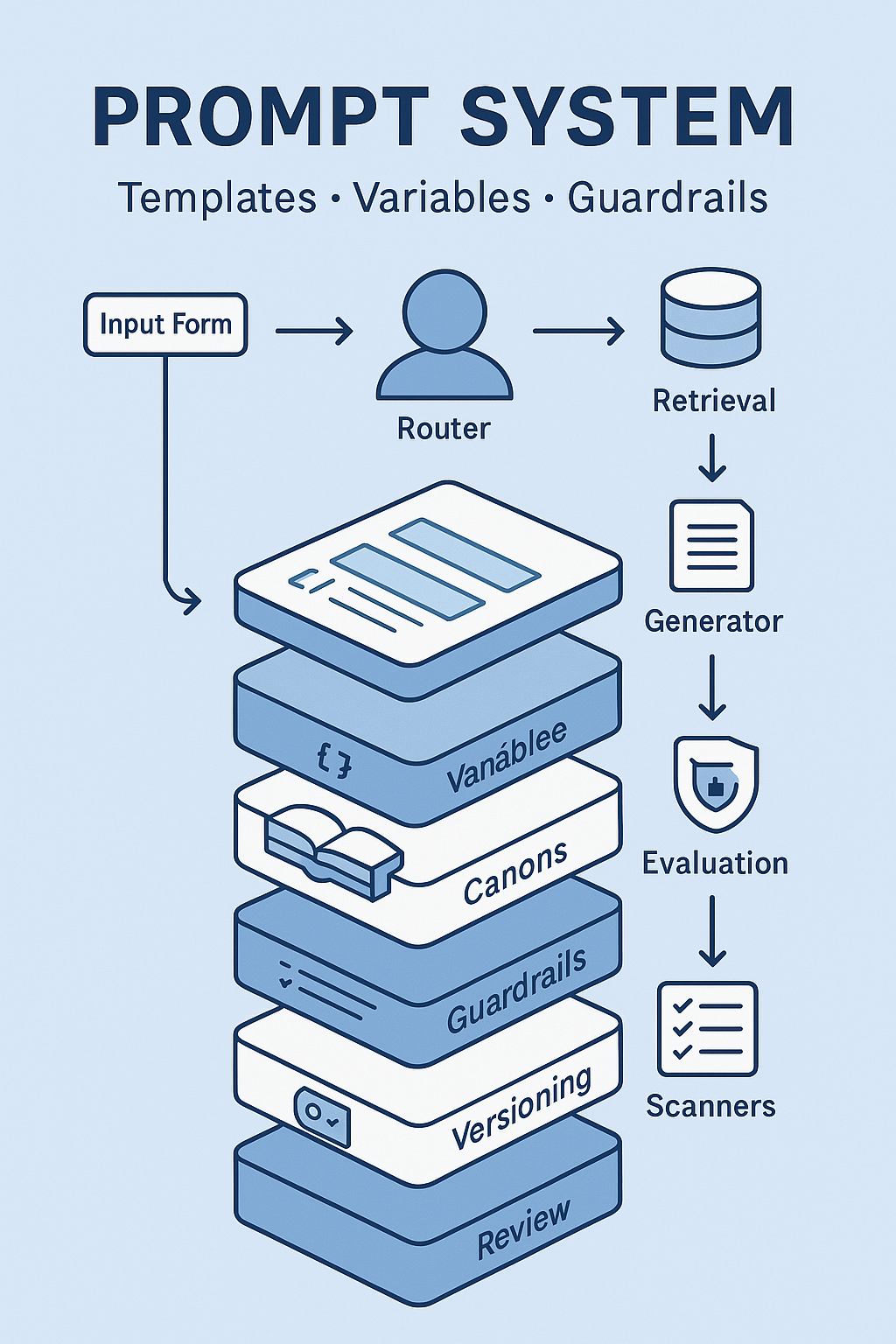

A Brief System That Doesn’t Suffocate Ideas

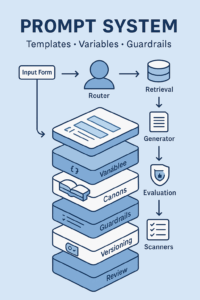

1) The Two-Layer Brief

Layer A — Strategy Canvas (human-authored):

- Problem reframed: one paragraph in plain language

- Audience JTBD (jobs to be done): jobs, anxieties, switching costs

- Creative territories: 3 named bets with short references (not scripts)

- Non-negotiables: claims, disclaimers, constraints

Layer B — Production Scaffold (auto-filled):

- Message map (promise → reason to believe → proof)

- Offer and primary CTA options

- Channel mix (+ specs, aspect ratios)

- UTM plan (pre-generated strings)

- Checklists (accessibility, legal, platform quirks)

- Links to prior winners and canon sections

How to run it: Humans complete Layer A in a form. The system then pulls canons, case studies, and specs to auto-generate Layer B—without writing the idea for you.
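To make the "pre-generated strings" in the UTM plan concrete, here is a minimal sketch of how Layer B could derive them from the typed inputs. The base URL, the `source` and `medium` values, and the `product_market_goal` campaign naming pattern are all assumptions for illustration; swap in your own taxonomy.

```python
import re
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def slug(text: str) -> str:
    """Lowercase and replace anything that is not a-z or 0-9 with underscores."""
    return re.sub(r"[^a-z0-9]+", "_", text.lower()).strip("_")


def build_utm_url(base_url: str, source: str, medium: str, campaign: str, content: str = "") -> str:
    """Append UTM parameters to base_url, keeping any query string it already has."""
    parts = urlsplit(base_url)
    query = dict(parse_qsl(parts.query))
    query.update(utm_source=slug(source), utm_medium=slug(medium), utm_campaign=slug(campaign))
    if content:
        query["utm_content"] = slug(content)
    return urlunsplit(parts._replace(query=urlencode(query)))


def utm_plan(base_url, product, goal, markets, territories, source, medium):
    """One tagged URL per market and territory (hypothetical naming pattern)."""
    rows = []
    for market in markets:
        for territory in territories:
            campaign = f"{product}_{market}_{goal}"
            rows.append(build_utm_url(base_url, source, medium, campaign, territory))
    return rows


plan = utm_plan(
    "https://example.com/notes-pro",  # placeholder landing page
    "Notes Pro",
    "lead_gen",
    ["US", "UK"],
    ["Focus Beats Features", "First-Draft Freedom", "From Chaos to Click"],
    source="linkedin",
    medium="paid_social",
)
for url in plan:
    print(url)
```

Generating the strings from typed fields means the naming convention is enforced once, in code, instead of being re-typed per asset.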

2) Variable Inputs, Not Prose

Use typed fields instead of paragraphs. Example:

product: "Notes Pro"

audiences: ["Students","Consultants"]

goal: "lead_gen"

territories:

- name: "Focus Beats Features"

- name: "First-Draft Freedom"

- name: "From Chaos to Click"

must_claims: ["Local-first encryption", "10-day free trial"]

markets: ["US","UK"]

Typed variables keep automation on rails while leaving creative space in territories.
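One way to enforce typed fields is to load the form into a small schema and validate it before anything downstream runs. The sketch below is an assumption about how that could look: the class names, the `ALLOWED_GOALS` vocabulary, and the specific rules are illustrative, apart from the "3 named bets" requirement taken from the Strategy Canvas.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical goal vocabulary; replace with your own taxonomy.
ALLOWED_GOALS = {"lead_gen", "awareness", "retention"}


@dataclass
class Territory:
    name: str


@dataclass
class BriefInput:
    product: str
    audiences: List[str]
    goal: str
    territories: List[Territory]
    must_claims: List[str] = field(default_factory=list)
    markets: List[str] = field(default_factory=list)

    def validate(self) -> List[str]:
        """Return human-readable problems; an empty list means the input is usable."""
        problems = []
        if not self.product.strip():
            problems.append("product is required")
        if self.goal not in ALLOWED_GOALS:
            problems.append(f"goal must be one of {sorted(ALLOWED_GOALS)}")
        if len(self.territories) != 3:
            problems.append("exactly 3 named territories are expected")
        if not self.markets:
            problems.append("at least one market is required")
        return problems


brief = BriefInput(
    product="Notes Pro",
    audiences=["Students", "Consultants"],
    goal="lead_gen",
    territories=[
        Territory("Focus Beats Features"),
        Territory("First-Draft Freedom"),
        Territory("From Chaos to Click"),
    ],
    must_claims=["Local-first encryption", "10-day free trial"],
    markets=["US", "UK"],
)
print(brief.validate())
```

Note that the territory itself stays a free-form name: the schema checks that three bets exist, not what they say.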

3) Evidence Packs

For each territory, the system compiles a 1-page evidence pack:

- Approved claims and sections from the Brand/Legal Canon

- 2–3 relevant case-study snippets (with links)

- Performance deltas from similar past work

- Platform do’s/don’ts for the formats you picked

Writers and designers now explore with real constraints—without the “make up a proof” pressure.
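The "no made-up proof" idea can be sketched as a lookup that refuses to produce a proof line unless the claim exists in the canon. Everything here is illustrative: the `CANON` dictionary, its keys, and the section label on the encryption claim are hypothetical stand-ins for whatever store holds your approved claims.

```python
from typing import Dict, NamedTuple


class CanonClaim(NamedTuple):
    text: str
    section: str


# Hypothetical canon entries; in practice these come from your Brand/Legal Canon.
CANON: Dict[str, CanonClaim] = {
    "faster_capture": CanonClaim("Faster note capture in meetings", "Brand Canon §3.2"),
    "local_first_encryption": CanonClaim("Local-first encryption", "Legal Canon (section TBD)"),
}


def proof_line(claim_id: str, canon: Dict[str, CanonClaim]) -> str:
    """Return an approved claim with its citation, or fail loudly if none exists."""
    claim = canon.get(claim_id)
    if claim is None:
        raise LookupError(f"no approved claim for {claim_id!r}; do not write a proof line")
    return f"{claim.text} [{claim.section}]"


print(proof_line("faster_capture", CANON))
```

Failing with an error, instead of returning an empty string, is a deliberate choice in this sketch: a missing claim should stop the pack from being compiled, not silently produce uncited copy.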

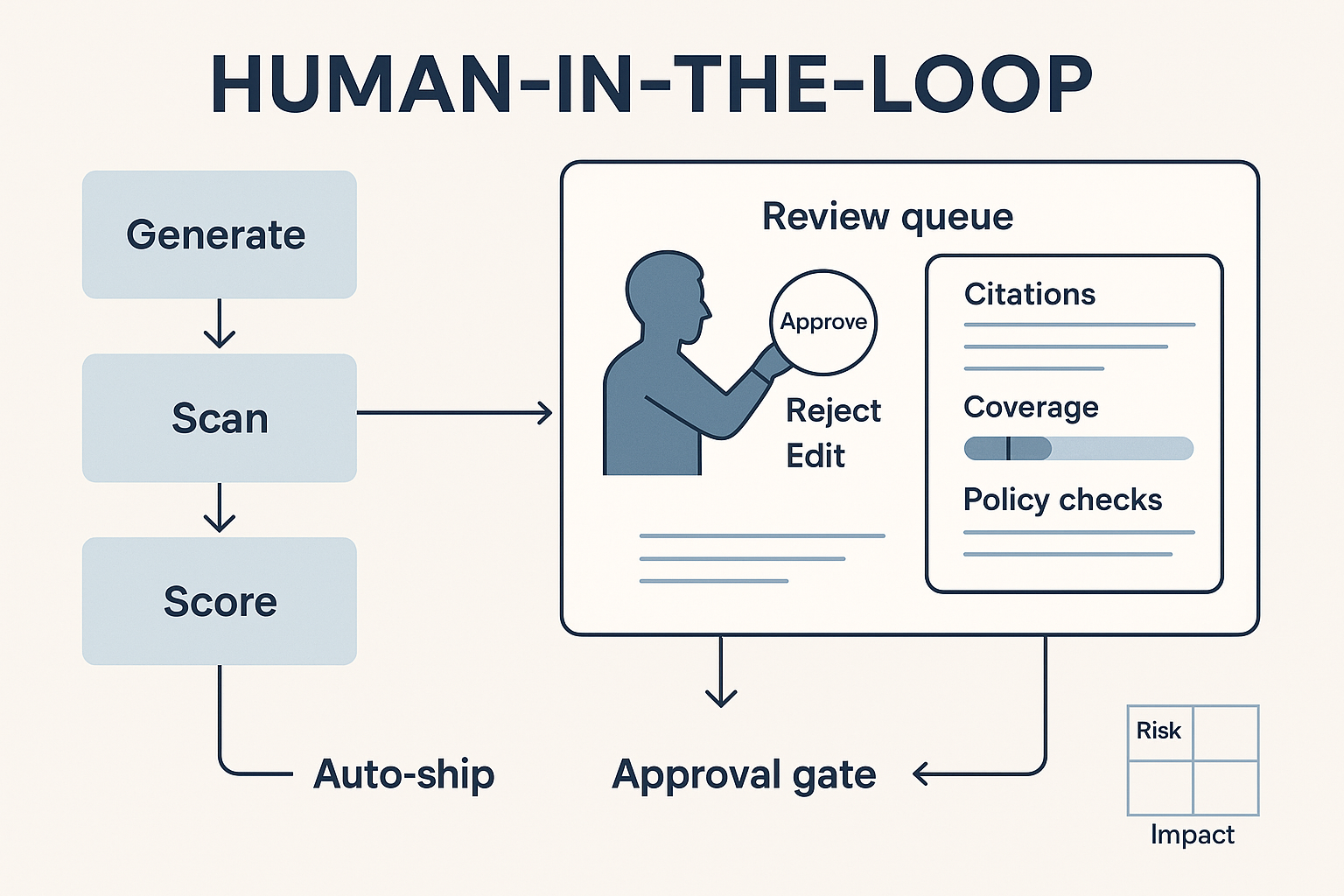

QA That Improves Work Instead of Sanding It Down

Think of QA as assistive diagnostics, not a gatekeeper.

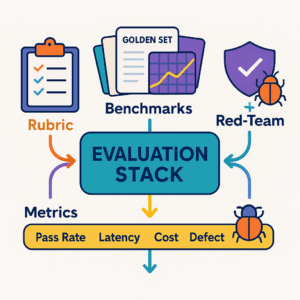

Automated checks (blocking):

- Banned terms, competitor names, and risky superlatives

- Missing or broken UTMs; naming conventions

- Required disclaimers per market

- Contrast ratios and alt-text presence

- Facts without citations to your canon
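Several of these blocking checks are mechanical enough to sketch in a few lines. The example below covers three of them under stated assumptions: the `BANNED_TERMS` list and the set of required UTM keys are hypothetical, while the contrast calculation follows the WCAG relative-luminance formula, with 4.5:1 as the AA threshold for normal-size text.

```python
import re
from urllib.parse import parse_qs, urlsplit

# Hypothetical list; yours would come from the Brand/Legal Canon.
BANNED_TERMS = ["fastest ever", "guaranteed", "best in the world"]
REQUIRED_UTM = ("utm_source", "utm_medium", "utm_campaign")


def find_banned_terms(text: str) -> list:
    """Return banned terms that appear as whole words or phrases, case-insensitively."""
    return [
        term
        for term in BANNED_TERMS
        if re.search(r"\b" + re.escape(term) + r"\b", text, flags=re.IGNORECASE)
    ]


def missing_utm_params(url: str) -> list:
    """Return the required UTM keys that are absent from the URL's query string."""
    params = parse_qs(urlsplit(url).query)
    return [key for key in REQUIRED_UTM if key not in params]


def _linear(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light."""
    c = channel / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple) -> float:
    r, g, b = (_linear(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: tuple, bg: tuple) -> float:
    """WCAG contrast ratio between two RGB colors; ranges from 1 to 21."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


print(find_banned_terms("The fastest ever note app"))
print(missing_utm_params("https://example.com/?utm_source=linkedin"))
print(round(contrast_ratio((0, 0, 0), (255, 255, 255)), 2))
```

Each function returns evidence (the matched terms, the missing keys, the measured ratio) so the QA report can say what failed, not just that something did.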

Automated checks (advisory):

- Readability alerts (where density kills rhythm)

- Overused patterns (e.g., 3 bullets + emoji + generic CTA)

- Voice drift vs brand tone (report, don’t rewrite)

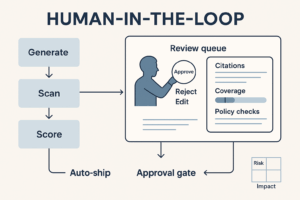

Output format: a single diff.

- Left: original copy or layout notes

- Right: suggested edits with inline citations

- Footer: checklist results + confidence/coverage

No red squiggles across three tools. One diff, one link.
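As a rough illustration of "one diff, one link," the standard library's `difflib` can produce the original-versus-suggestion view, with checklist results appended as a footer. The function name, the report layout, and the checklist keys are assumptions; a unified diff is used here in place of a side-by-side layout purely to keep the sketch short.

```python
import difflib


def render_qa_diff(asset_name: str, original: str, suggested: str, checklist: dict) -> str:
    """Build a single text report: header, unified diff, then checklist footer."""
    diff_lines = difflib.unified_diff(
        original.splitlines(),
        suggested.splitlines(),
        fromfile="original",
        tofile="suggested",
        lineterm="",
    )
    footer = [f"- {name}: {'PASS' if ok else 'FAIL'}" for name, ok in checklist.items()]
    return "\n".join([f"# QA Diff: {asset_name}", *diff_lines, "## Checklist", *footer])


report = render_qa_diff(
    "hero_banner",  # hypothetical asset name
    "The fastest ever note app",
    "Faster note capture in meetings [Brand Canon §3.2]",
    {"UTM check": True, "Alt text on hero": False},
)
print(report)
```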

The Workflow (End-to-End)

- Kickoff (Open) — humans define territories and upload references (moodboards, film stills, lines).

- Brief Build (Close) — system pulls specs, UTMs, claims, and prior winners to scaffold the brief around those territories.

- Concepting (Open) — writers/designers explore; no scoring beyond basic safety.

- QA Pass (Close) — run the diff: claims matched, tone linted, links checked, accessibility verified.

- Creative Review (Open) — creative lead decides what tension to keep; not every “issue” gets fixed if it’s purposeful.

- Finalization (Close) — publish assets + brief, lock versions, ship.

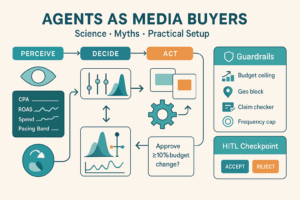

Guardrails that Preserve Originality

- Policy lives in the system prompt, not in Slack memory: no invented numbers; cite canon sections; never imply endorsements; separate facts from metaphors.

- Retrieval-first: model must fetch approved claims before it can write proof lines.

- Advisory vs blocking: Only policy and safety are blocking. Voice and rhythm are advisory with examples.

- Diversity quota in the open phase: at least one concept must challenge house style each cycle.

- Human veto codified: a checkbox—“Keep deliberate tension here (explain why)”—overrides style suggestions and logs rationale.
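The advisory-versus-blocking split and the human veto can be expressed as a small data model. This is a sketch under assumptions: the `Finding` type and function names are hypothetical, and the rule that blocking findings cannot be vetoed is one reading of "only policy and safety are blocking."

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Finding:
    check: str
    severity: str  # "blocking" or "advisory"
    message: str
    veto_reason: Optional[str] = None  # set when a human keeps deliberate tension


def can_ship(findings: List[Finding]) -> bool:
    """Only blocking findings stop a release; advisories never do."""
    return not any(f.severity == "blocking" for f in findings)


def open_advisories(findings: List[Finding]) -> List[Finding]:
    """Advisory findings that nobody has explicitly chosen to keep."""
    return [f for f in findings if f.severity == "advisory" and not f.veto_reason]


def apply_veto(finding: Finding, reason: str) -> Finding:
    """Record a human override on a style suggestion, with its rationale."""
    if finding.severity == "blocking":
        raise ValueError("policy and safety findings cannot be vetoed")
    if not reason.strip():
        raise ValueError("a veto needs a written rationale")
    finding.veto_reason = reason
    return finding


findings = [
    Finding("voice_drift", "advisory", "Headline is terser than house style"),
    Finding("disclaimer", "blocking", "UK disclaimer missing"),
]
apply_veto(findings[0], "Terse on purpose; matches the 'Focus Beats Features' territory")
print(can_ship(findings), len(open_advisories(findings)))
```

Requiring a non-empty reason is what turns the checkbox into a log: the rationale travels with the asset instead of living in someone's memory.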

Templates You Can Copy

A) Brief (Markdown export)

# Campaign Brief — {{product}} — {{markets}}

## 1) Problem (plain)

{{human.problem}}

## 2) Audience JTBD

- Job: …

- Anxieties: …

- Triggers: …

## 3) Creative Territories

1) {{territory_1_name}} — 2–3 reference links

2) {{territory_2_name}}

3) {{territory_3_name}}

## 4) Message Map

Promise → RTB → Proof (citations)

## 5) Offer & CTAs

Primary / Secondary

## 6) Channels & Specs

Platform → formats → aspect ratios → length

## 7) UTM Plan (auto)

{{generated.utm_table}}

## 8) Checklists

- Accessibility: pass/fail items

- Legal: required lines per market

- Platform quirks: pass/fail

## 9) Prior Winners

Links + notes
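If you are not using a templating library, the `{{placeholder}}` fields in the brief above can be filled with a small substitution function. This is a minimal sketch; the `render` helper and its behavior of leaving unknown placeholders untouched (and reporting them) are assumptions, not a description of any particular tool.

```python
import re

# Matches {{name}} and dotted names such as {{human.problem}}.
PLACEHOLDER = re.compile(r"\{\{\s*([\w.]+)\s*\}\}")


def render(template: str, values: dict):
    """Fill known placeholders; return the text plus a list of unfilled keys."""
    missing = []

    def substitute(match):
        key = match.group(1)
        if key not in values:
            missing.append(key)
            return match.group(0)  # leave the placeholder visible
        return str(values[key])

    return PLACEHOLDER.sub(substitute, template), missing


text, missing = render(
    "## 1) Problem (plain)\n{{human.problem}}\n## 7) UTM Plan (auto)\n{{generated.utm_table}}",
    {"generated.utm_table": "(table goes here)"},
)
print(text)
print("unfilled:", missing)
```

Reporting unfilled keys makes it obvious when a human-authored field such as `human.problem` was skipped, which is exactly the part automation should not fill in.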

B) QA Diff (for copy)

# QA Diff — {{asset_name}}

## Summary

- Policy: PASS

- Claims: 1 fix needed (cite Case #41)

- Accessibility: Alt text missing on hero

## Diff

- Original: “The fastest ever note app…”

- Suggestion: “Faster note capture in meetings” [Brand Canon §3.2]

Reason: Avoid unverifiable superlative; use approved benefit/claim.

## Links

- UTM check: ✓

- KPI dictionary: “Activation” link

- Disclaimers (US/UK): added

Metrics That Matter

- Time to first draft (brief + first creative): target a 50–70% reduction

- QA rework rate: target 30% fewer edits before sign-off

- Citation completeness: ≥95% of claims with canon links

- Accessibility pass rate: ≥98% at launch

- Diversity of territories: at least one “out-of-pattern” concept per cycle

- Stakeholder confidence: weekly survey on clarity of briefs and usefulness of QA diffs
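Two of these metrics reduce to simple arithmetic, sketched below. The function names and the shape of the claim records (a dict with an optional `canon_link` key) are assumptions; the numbers in the usage lines are made-up inputs for illustration, not results.

```python
def percent_reduction(baseline: float, current: float) -> float:
    """Percentage drop from baseline to current (positive means improvement)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) * 100 / baseline


def citation_completeness(claims: list) -> float:
    """Share of claims, in percent, that carry a canon link."""
    if not claims:
        return 100.0  # nothing to cite
    cited = sum(1 for claim in claims if claim.get("canon_link"))
    return cited * 100 / len(claims)


# Illustrative inputs: 10 hours to first draft before, 4 hours after.
print(percent_reduction(10, 4))

# Illustrative inputs: 19 of 20 claims have a canon link.
claims = [{"canon_link": "Brand Canon §3.2"}] * 19 + [{"canon_link": None}]
print(citation_completeness(claims))
```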

Anti-Patterns (Avoid These)

- Mega-prompts that write the whole idea. You’ll converge to the mean.

- Free-text briefs. Ambiguity in → revisions out.

- QA as taste police. If it rewrites voice by default, you’ll lose edge.

- No retrieval/citations. That’s how invented numbers sneak in.

- Copying yesterday’s winner. Bake exploration into the system or stagnation follows.

30/60/90 Rollout

Days 1–30 (Foundation)

- Implement two-layer brief template + typed inputs

- Wire retrieval to Brand/Legal/Performance Canons

- Ship QA diff with claim matcher, UTM checker, accessibility scan

Days 31–60 (Pilot)

- Run 3 cycles across one product line

- Log time saved, edit counts, and reviewer feedback

- Add “human veto” and diversity quota logic

Days 61–90 (Scale)

- Add format-specific variants (video, long-form, display)

- Publish SOPs + change-log; integrate with CMS/MRM

- Turn on automated versioning and release notes

Conclusion

Automation shouldn’t write your ideas—it should protect them. By separating Open (human exploration) from Close (structured automation), you get the best of both worlds: faster briefs, cleaner QA, and work that still has teeth. Templates and guardrails remove grind and risk; territories and human veto keep edge and surprise. Ship the system, not just a prompt.
