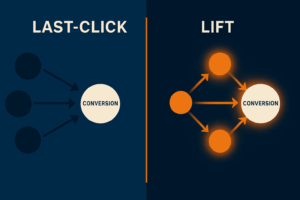

Marketing platforms love to take credit. Dashboards show flawless ROAS, last-click reports tell a comforting story, and lift studies conveniently appear only when they favor the platform. But executives don’t want pretty numbers — they want to know what would have happened without the ads.

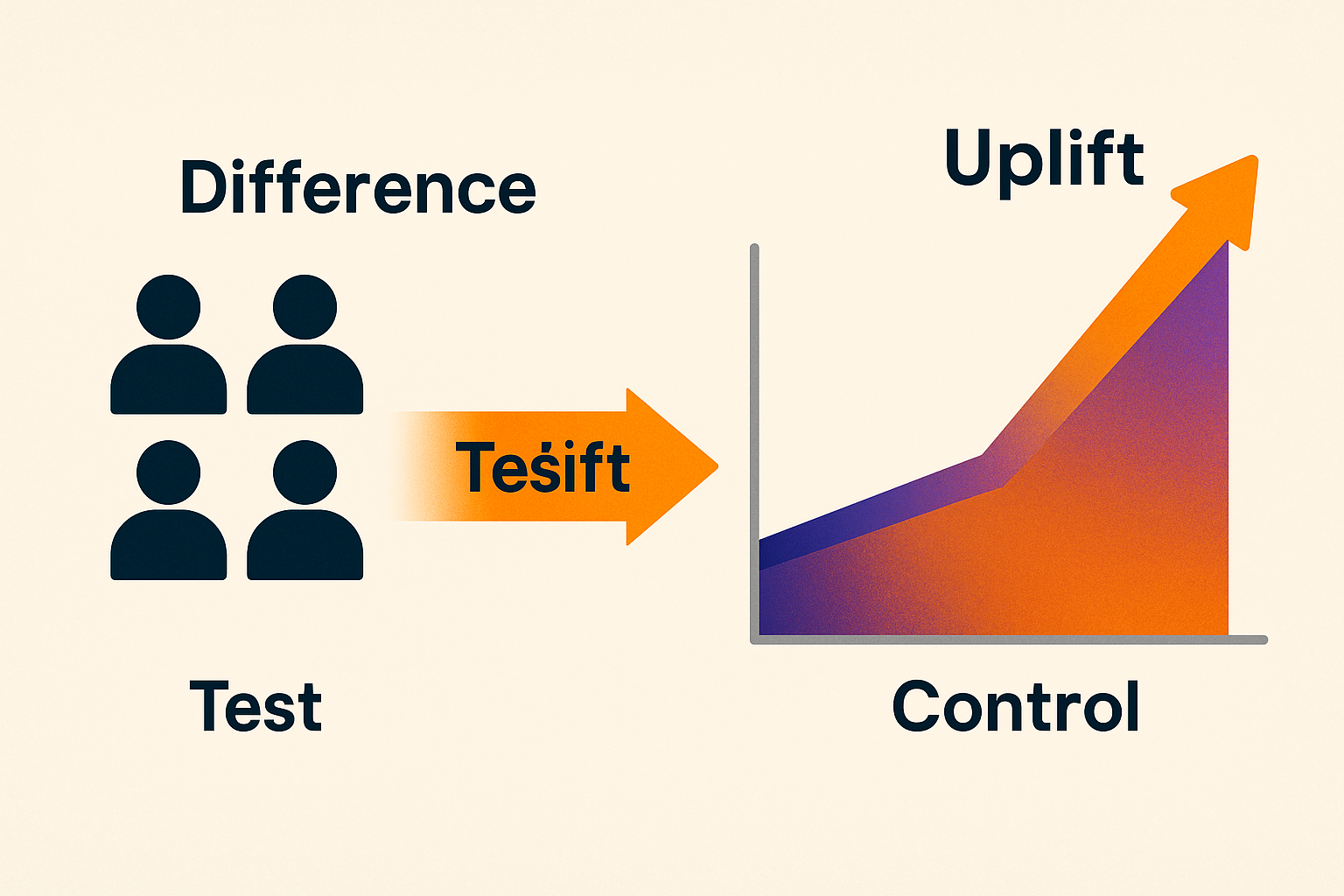

That’s the essence of incrementality: the additional value generated by advertising beyond what would have occurred organically.

The good news? You don’t need a data science team to measure it. With clear experiment design, disciplined execution, and a few simple statistical rules, any marketing team can run valid incrementality tests in one to two weeks.

What Incrementality Really Means

At its simplest:

$$\text{Incrementality} = \text{Outcome}_{\text{Exposed}} - \text{Outcome}_{\text{Control}}$$

If the difference is positive and statistically significant, the channel is driving true incremental value. If not, your campaign may just be cannibalizing organic demand.

Four Test Designs You Can Run Without Data Scientists

1. Geo Holdouts (Regional Testing)

Best for: large budgets, national or multi-city advertisers.

How it works:

- Split your geography into comparable clusters (e.g., similar cities or regions).

- Randomly assign half as test (ads on) and half as control (ads off).

- Run the campaign only in test regions.

- Compare normalized revenue or conversions vs. baseline.

Pros: Works even for offline sales; no PII needed.

Cons: Requires budget and time; some “spillover” between markets is inevitable.
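To make the geo steps concrete, here is a minimal Python sketch, not a definitive implementation. The function names, the fixed seed, and the way revenue is normalized (each group's growth against its own pre-campaign baseline) are assumptions made for illustration.

```python
import random

def assign_geos(geos, seed=42):
    """Randomly split comparable geos into a test half and a control half."""
    rng = random.Random(seed)      # fixed seed so the split can be reproduced and audited
    shuffled = list(geos)          # copy, so the caller's list is not modified
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

def normalized_lift(test_baseline, test_campaign, control_baseline, control_campaign):
    """Growth vs. baseline in test geos, relative to growth in control geos.

    Each argument is total revenue (or conversions) for the group in that period.
    Returns a fraction, e.g. 0.10 means test geos grew 10% more than control geos.
    """
    test_growth = test_campaign / test_baseline
    control_growth = control_campaign / control_baseline
    return test_growth / control_growth - 1.0

# Illustrative city names and revenue figures only
test_geos, control_geos = assign_geos(["Austin", "Denver", "Tampa", "Boise", "Omaha", "Tulsa"])
lift = normalized_lift(100_000, 115_000, 90_000, 94_500)
print(test_geos, control_geos, f"{lift:.1%}")
```

Dividing by control growth strips out whatever moved both groups at once (seasonality, promotions, news), which is the point of having a holdout in the first place.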

2. Switchback Tests (Time-Based)

Best for: concentrated audiences, limited geographies.

How it works:

- Define a test period (e.g., 6 weeks).

- Alternate ON and OFF periods by week or day.

- Track spend, conversions, and revenue for each block.

- Compare average ON vs. OFF performance.

Pros: Simple to implement, great for high-reach channels.

Cons: Sensitive to seasonality and external events; requires disciplined scheduling.
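A switchback schedule is easy to generate and check in code. The sketch below assumes weekly blocks that start with an ON block and compares average conversions per block. The function names and the sample numbers are hypothetical.

```python
from datetime import date, timedelta
from statistics import mean

def switchback_schedule(start, n_blocks, block_days=7):
    """Return (block_start, block_end, state) tuples, alternating ON and OFF."""
    schedule = []
    for i in range(n_blocks):
        block_start = start + timedelta(days=i * block_days)
        block_end = block_start + timedelta(days=block_days - 1)
        state = "ON" if i % 2 == 0 else "OFF"   # even blocks run ads, odd blocks pause them
        schedule.append((block_start, block_end, state))
    return schedule

def on_off_difference(blocks):
    """blocks: list of (state, conversions). Returns mean ON minus mean OFF."""
    on = [conv for state, conv in blocks if state == "ON"]
    off = [conv for state, conv in blocks if state == "OFF"]
    return mean(on) - mean(off)

# Six weekly blocks, matching the 6-week example above (conversion counts are made up)
for block in switchback_schedule(date(2024, 1, 1), n_blocks=6):
    print(block)
observed = [("ON", 120), ("OFF", 100), ("ON", 130), ("OFF", 110), ("ON", 125), ("OFF", 105)]
print(on_off_difference(observed))
```

Keeping the number of ON and OFF blocks equal means a slow trend across the test period affects both averages to a similar degree.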

3. User Holdouts (Audience Splits)

Best for: logged-in environments, email subscribers, loyalty apps.

How it works:

- Randomly split users into test (see ads or emails) and control (no exposure).

- Exclude control users from campaign targeting.

- Compare conversion rates and revenue per user.

Pros: Cleanest design, directly tied to individuals.

Cons: Requires strict targeting discipline; small leaks can invalidate results.
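One common way to keep a user split stable across campaigns and tools is to derive the group from a hash of the user ID rather than from a one-off random draw. This is a swapped-in technique, not something the designs above require. The salt, the 50/50 share, and the function names are assumptions.

```python
import hashlib

def assign_group(user_id, salt="holdout-test-1", control_share=0.5):
    """Deterministically map a user ID to 'control' or 'test'.

    The same user_id and salt always give the same group, so the
    split can be recomputed anywhere without storing a lookup table.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000   # first 32 bits scaled to [0, 1)
    return "control" if bucket < control_share else "test"

def exclusion_list(user_ids, **kwargs):
    """Users who must be excluded from campaign targeting (the control group)."""
    return [u for u in user_ids if assign_group(u, **kwargs) == "control"]

# Illustrative IDs only
users = [f"user-{i}" for i in range(10)]
print(exclusion_list(users))
```

Changing the salt for each new test reshuffles the groups, so the same users are not held out every time.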

4. Platform Lift Tests

Best for: quick wins on Meta, Google, YouTube, LinkedIn.

How it works:

- Use built-in brand or conversion lift tests.

- Document design: audience, period, creatives, and metrics.

- Validate platform results against your own business KPIs.

Pros: Fast setup, randomization handled by the platform.

Cons: Methodology is opaque; results should be sanity-checked.

Minimal Statistics for Marketers

You don’t need regression models — just three simple steps:

- Calculate Uplift

$$\text{Uplift} = \frac{\text{Conversions}_{\text{Test}}}{\text{Users}_{\text{Test}}} - \frac{\text{Conversions}_{\text{Control}}}{\text{Users}_{\text{Control}}}$$

- Relative Lift (%)

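$$\text{Lift}\% = \frac{\text{Uplift}}{\text{Conversion}_{\text{Control}}} \times 100$$

Here $\text{Conversion}_{\text{Control}}$ is the control group's conversion rate, $\text{Conversions}_{\text{Control}} / \text{Users}_{\text{Control}}$, not its raw conversion count.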

- Check Significance

If each group has ≥300 conversions, approximate standard error is:

$$SE = \sqrt{\frac{p_t (1-p_t)}{n_t} + \frac{p_c (1-p_c)}{n_c}}$$

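Here p_t and p_c are the test and control conversion rates, and n_t and n_c are the number of users in each group. The result is significant at the 95% confidence level if |Uplift| > 1.96 × SE.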

Rule of thumb: if lift is <5% and variance is high, run a longer or larger test.
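The three steps fit in one short function. This is a sketch of the formulas above, with an assumed function name and made-up input counts. It uses the same normal approximation, so it only makes sense once each group has enough conversions.

```python
from math import sqrt

def lift_summary(conv_test, users_test, conv_control, users_control, z=1.96):
    """Uplift, relative lift (%), standard error, and a significance flag."""
    p_t = conv_test / users_test           # test conversion rate
    p_c = conv_control / users_control     # control conversion rate
    uplift = p_t - p_c                     # absolute difference in rates
    relative_lift_pct = uplift / p_c * 100
    se = sqrt(p_t * (1 - p_t) / users_test + p_c * (1 - p_c) / users_control)
    return {
        "uplift": uplift,
        "relative_lift_pct": relative_lift_pct,
        "se": se,
        "significant": abs(uplift) > z * se,   # z=1.96 corresponds to 95% confidence
    }

# Illustrative counts: 600 of 20,000 test users converted vs. 500 of 20,000 control users
print(lift_summary(600, 20_000, 500, 20_000))
```

With these sample counts the uplift is 0.5 percentage points, a 20% relative lift, and it clears the 1.96 × SE bar. Shrink the gap to 310 vs. 300 conversions and the same check fails, which is the "run a longer or larger test" case.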

A 14-Day Launch Plan

- Days 1–2: Define hypothesis.

- Days 3–4: Choose design (geo, switchback, user, or platform).

- Days 5–6: Define success metrics (conversions, revenue per user).

- Days 7–12: Launch and monitor. Control exposure carefully.

- Days 13–14: Analyze and decide: scale, improve creative, or stop.

What Success Looks Like

- Incremental CPA (iCPA)

$$\text{iCPA} = \frac{\text{Spend}}{\text{Incremental Conversions}}$$

- Incremental ROAS (iROAS)

$$\text{iROAS} = \frac{\text{Incremental Revenue}}{\text{Spend}}$$

If iROAS > 1 (or above your margin threshold), scale the campaign.
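Both metrics are one-line calculations once you have incremental conversions and revenue. The sketch below assumes incremental conversions are estimated by applying the control conversion rate to the test group. That estimate, the function names, and the sample figures are illustrative assumptions.

```python
def incremental_conversions(conv_test, users_test, conv_control, users_control):
    """Test conversions minus what the control rate predicts for the test group."""
    expected_without_ads = conv_control / users_control * users_test
    return conv_test - expected_without_ads

def icpa(spend, incremental_conv):
    """Spend per incremental conversion; None when there is no positive lift."""
    if incremental_conv <= 0:
        return None
    return spend / incremental_conv

def iroas(incremental_revenue, spend):
    """Incremental revenue earned per unit of spend."""
    return incremental_revenue / spend

# Illustrative figures only
inc = incremental_conversions(600, 20_000, 500, 20_000)
print(inc, icpa(10_000, inc), iroas(15_000, 10_000))
```

Returning `None` for zero or negative lift avoids reporting a meaningless or negative cost per acquisition when the test shows no incremental effect.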

Common Pitfalls (and How to Avoid Them)

- No real control group.

- Changing creatives mid-test.

- Over-relying on last-click attribution.

- Too few conversions (<300 per group).

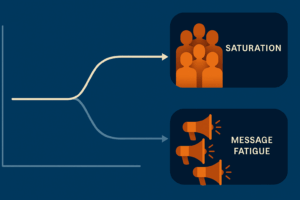

- Excessive frequency, leading to wasted spend.

Case Snapshots

- B2B SaaS: LinkedIn switchback test → +14% incremental demos, iCPA -18%.

- E-commerce: Geo holdout in 10 cities → +8% incremental sales, but iROAS <1 until creative was optimized.

- Mobile App: User holdout → 0% lift at frequency 7+. After capping at 3, lift jumped to +9%.

Final Thought

Incrementality testing is not a luxury — it’s a discipline. With the right test design, basic statistics, and strict execution, any marketing team can measure true additional value and stop confusing attribution with causation.

Remember: incrementality first, scaling second. That’s how budgets stop being noisy, and growth becomes predictable.
